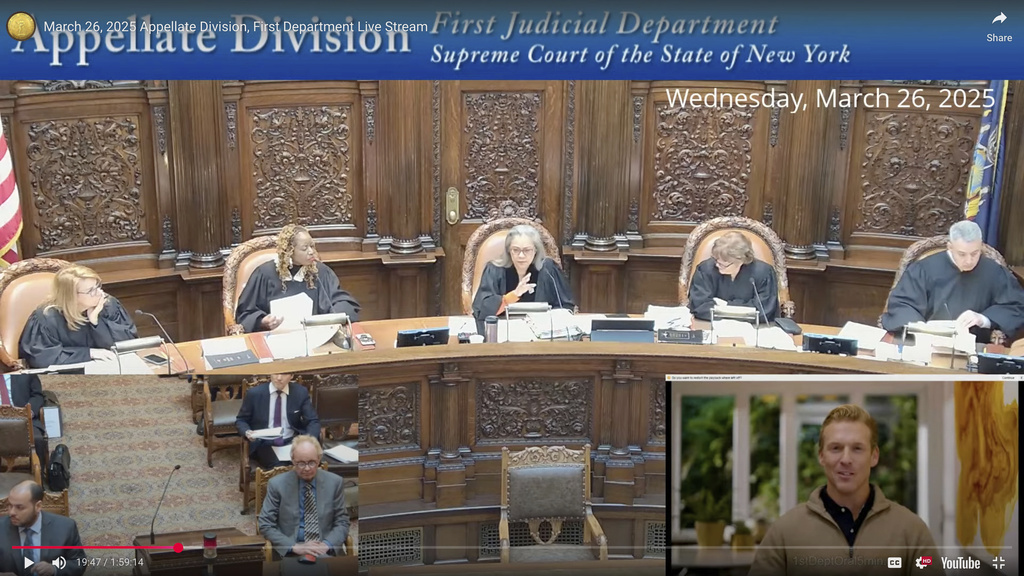

It took only seconds for the judges on a New York appeals court to realize that the man addressing them from a video screen, a person about to present an argument in a lawsuit, not only had no law degree, but didn’t exist at all.

The latest bizarre chapter in the awkward arrival of artificial intelligence in the legal world unfolded March 26 under the stained-glass dome of the New York State Supreme Court Appellate Division’s First Judicial Department, where a panel of judges was set to hear from Jerome Dewald, a plaintiff in an employment dispute.

“The appellant has submitted a video for his argument,” said Justice Sallie Manzanet-Daniels. “Ok. We will hear that video now.”

On the video screen appeared a smiling, youthful-looking man with a sculpted hairdo, button-down shirt and sweater.

“May it please the court,” the man began. “I come here today a humble pro se before a panel of five distinguished justices.”

“Ok, hold on,” Manzanet-Daniels said. “Is that counsel for the case?”

“I generated that. That’s not a real person,” Dewald answered.

It was, in fact, an avatar generated by artificial intelligence. The judge was not pleased.

“It would have been nice to know that when you made your application. You did not tell me that sir,” Manzanet-Daniels said before yelling across the room for the video to be shut off.

“I don’t appreciate being misled,” she said before letting Dewald continue with his argument.

Dewald later penned an apology to the court, saying he hadn’t intended any harm. He didn’t have a lawyer representing him in the lawsuit, so he had to present his legal arguments himself. And he felt the avatar would be able to deliver the presentation without his own usual mumbling, stumbling and tripping over words.

In an interview with The Associated Press, Dewald said he applied to the court for permission to play a prerecorded video, then used a product created by a San Francisco tech company to create the avatar. Originally, he tried to generate a digital replica that looked like him, but he was unable to accomplish that before the hearing.

“The court was really upset about it,” Dewald conceded. “They chewed me up pretty good.”

Even real lawyers have gotten into trouble when their use of artificial intelligence went awry.

In June 2023, two attorneys and a law firm were each fined $5,000 by a federal judge in New York after they used an AI tool to do legal research, and as a result wound up citing fictitious legal cases made up by the chatbot. The firm involved said it had made a “good faith mistake” in failing to understand that artificial intelligence might make things up.

Later that year, more fictitious court rulings invented by AI were cited in legal papers filed by lawyers for Michael Cohen, a former personal lawyer for President Donald Trump. Cohen took the blame, saying he didn’t realize that the Google tool he was using for legal research was also capable of so-called AI hallucinations.

Those were errors, but Arizona’s Supreme Court last month intentionally began using two AI-generated avatars, similar to the one that Dewald used in New York, to summarize court rulings for the public.

Daniel Shin, an adjunct professor and assistant director of research at the Center for Legal and Court Technology at William & Mary Law School, said he wasn’t surprised to learn of Dewald’s introduction of a fake person to argue an appeals case in a New York court.

“From my perspective, it was inevitable,” he said.

He said it was unlikely that a lawyer would do such a thing because of tradition and court rules and because they could be disbarred. But he said individuals who appear without a lawyer and request permission to address the court are usually not given instructions about the risks of using a synthetically produced video to present their case.

Dewald said he tries to keep up with technology, having recently listened to a webinar sponsored by the American Bar Association that discussed the use of AI in the legal world.

As for Dewald’s case, it was still pending before the appeals court as of Thursday.