Law enforcement officials in New Jersey have brought forward a lawsuit against the messaging app Discord, citing claims that the makers of the software “misled parents about the efficiency of its safety controls and obscured the risks children faces while using the application.”

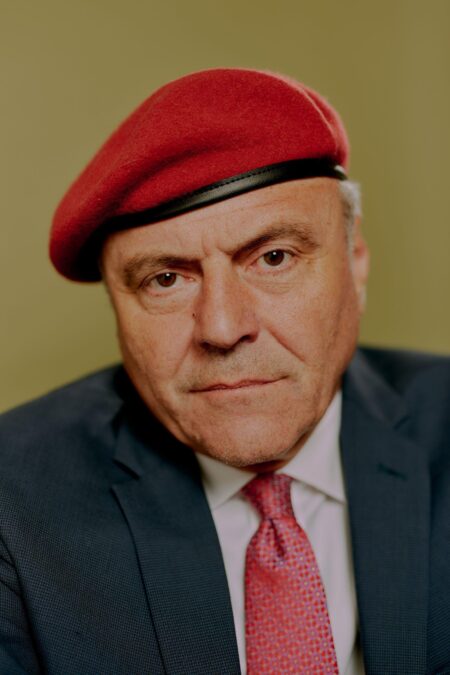

In a statement, New Jersey Attorney General Matthew Platkin claimed the makers of the application have violated the state’s consumer protection laws and have “exposed New Jersey children to sexual and violent content, leaving them vulnerable to online predators lurking on the Discord app.”

“Discord markets itself as a safe space for children, despite being fully aware that the application’s misleading safety settings and lax oversight has made it a prime hunting ground for online predators seeking easy access to children,” said Platkin in a statement on the lawsuit. “These deceptive claims regarding its safety settings have allowed Discord to attract a growing number of children to use its application, where they are at risk. We intend to put a stop to this unlawful conduct and hold Discord accountable for the harm it has caused our children.”

The complaint, which was filed on Thursday, alleges Discord engaged in multiple violations of the New Jersey Consumer Fraud Act.

Discord ‘could not and did not’ protect young users

“Discord knew its safety features and policies could not and did not protect its youthful user base, but refused to do better,” the complaint alleges.

Specifically, Platkin’s office claimed Discord, an app that allows users to share messages, photos and videos, misled parents and children about its safety settings for direct messages.

According to court documents, Platkin argues that a year-long investigation revealed that, although Discord has represented its app as safe and has touted its Safe Direct Messaging feature and its successors, which it claimed would automatically scan and delete private direct messages that contained explicit media content, the app failed to live up to those claims.

Lawsuit claims child users ‘inundated with explicit content’

Among the issues that Platkin’s office cited with the app, officials said Discord offers custom emojis, stickers, and soundboard effects, meant to make chats more engaging and kid-friendly.

And, Platkin’s office notes, it has created or facilitated “student hubs” as well as communities focused on popular kids’ games, like Roblox.

However, officials said predators can use these features and communities to make online “friends” with underage users. Then, Platkin’s office said, “child users can be—and are—inundated with explicit content.”

Platkin’s office also cited issues with the app’s “Safe Direct Messaging” feature, claiming that despite Discord’s representation that the feature scans and deletes messages with explicit content, not all such content was being detected or deleted.

“Simply put, Discord has promised parents safety while simultaneously making deliberate choices about its app’s design and default settings, including Safe Direct Messaging and age verification systems, that broke those promises,” Platkin’s office said in a statement on the lawsuit. “As a result of Discord’s decisions, thousands of users were misled into signing up, believing they or their children would be safe, when they were really anything but.”

The lawsuit, as noted by Platkin’s office, seeks a number of remedies, including an injunction to stop Discord from violating New Jersey’s Consumer Fraud Act, civil penalties, and an order requiring the company to give up any profits generated in New Jersey.

Discord representatives did not immediately respond to requests for comment made by NBC10 following Thursday’s announcement.